You can use the Shopify Export Integration connector to export Metafield data to Shopify.

- Basic knowledge of Treasure Data

- Basic knowledge of Shopify

- The result output schema must match the columns (name and data type) required by the target resource.

To obtain Shopify Credentials, complete the following steps:

- Sign up at https://www.shopify.com/ to create an online store for the user.

- Enter the details about the user.

- Enter additional details about the business.

- Create a custom app.

- After the custom app is created, you can view the store's API credentials and use them to connect external applications. The API key is required to create a connection to Treasure Data.

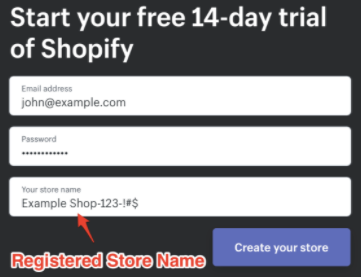

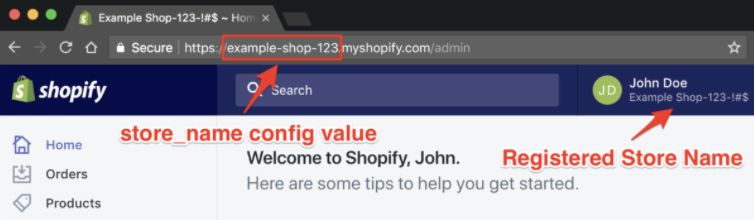

Shopify translates your free-form Store Name into a URL-friendly value. It removes special characters and replaces spaces with hyphens, as shown in this example:

"Example Shop-123-!#$" becomes "example-shop-123"
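The translation described above can be approximated in a few lines of Python. This is only a rough sketch: Shopify's exact rules are not documented here, so the lowercasing, the character whitelist, and the function name `approximate_store_handle` are assumptions chosen to reproduce the single example given. Always confirm the real value in your Admin URL.

```python
import re


def approximate_store_handle(store_name: str) -> str:
    """Approximate the URL-friendly form of a free-form store name.

    Assumed rules (not confirmed by Shopify): lowercase the name, turn runs
    of whitespace into a hyphen, drop every character that is not a letter,
    digit, or hyphen, then collapse and trim leftover hyphens.
    """
    lowered = store_name.strip().lower()
    hyphenated = re.sub(r"\s+", "-", lowered)
    cleaned = re.sub(r"[^a-z0-9-]", "", hyphenated)
    collapsed = re.sub(r"-{2,}", "-", cleaned)
    return collapsed.strip("-")


handle = approximate_store_handle("Example Shop-123-!#$")
print(handle)
```

A helper like this is useful only as a sanity check before you paste the Store Name into the connection form.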

Use the translated value, which appears in the Admin URL after you sign in.

In Treasure Data, you must create and configure the data connection, to be used during export, prior to running your query. As part of the data connection, you provide authentication to access the integration.

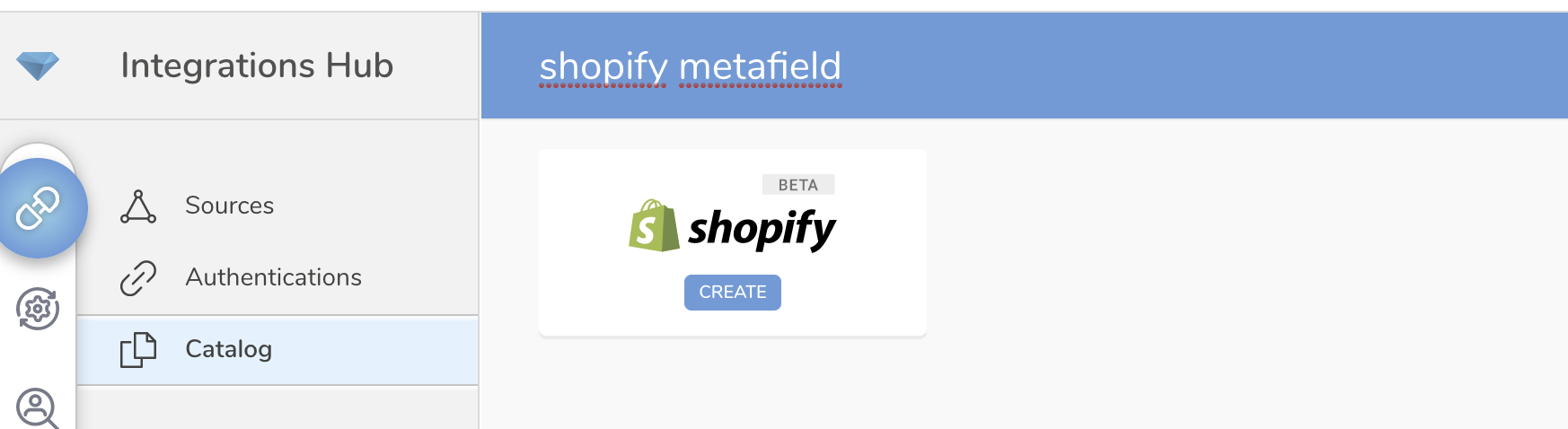

Open the TD Console.

Navigate to Integrations Hub > Catalog.

Search for Shopify Metafield. Select Create.

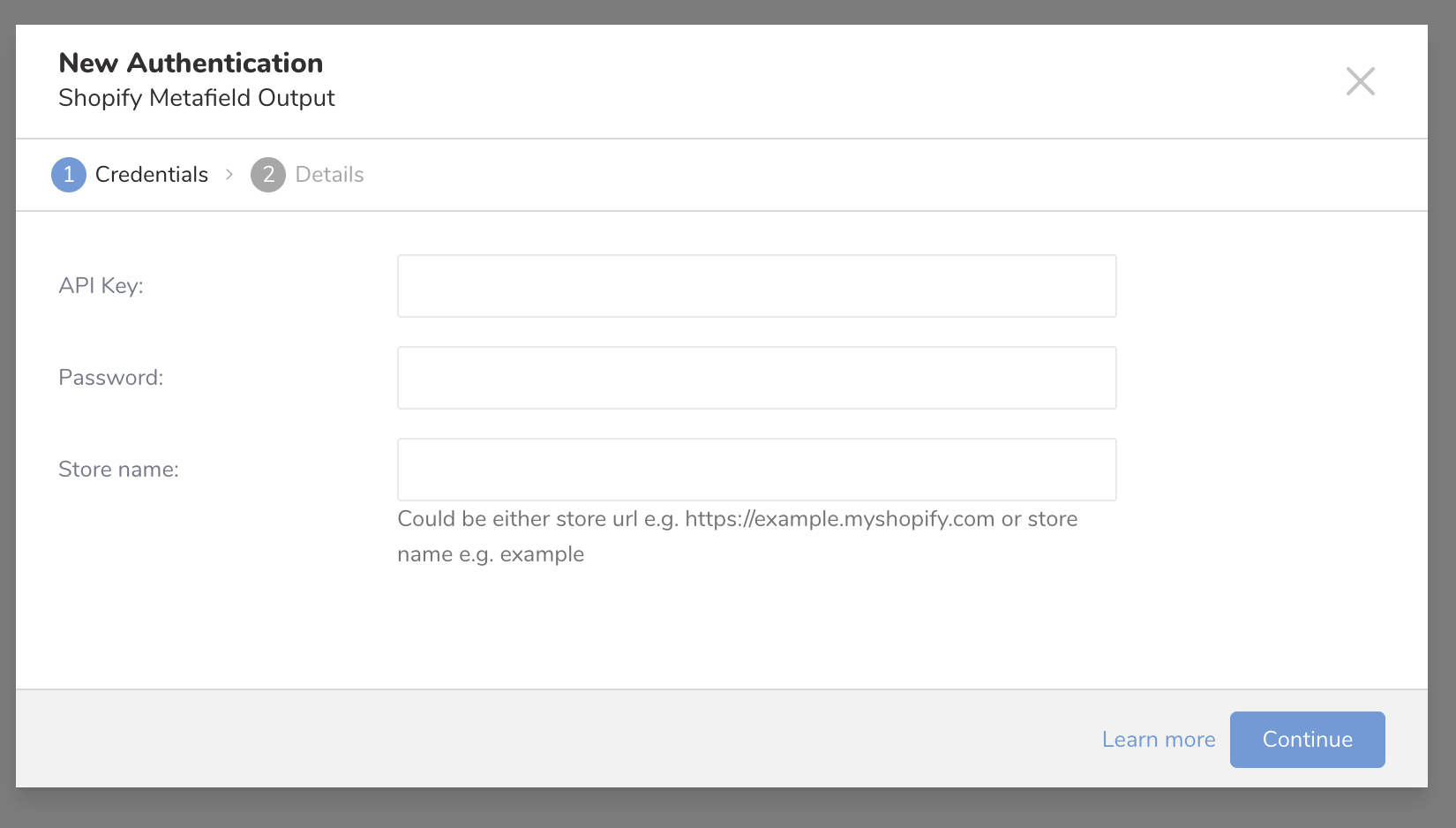

The following dialog opens. Complete the required fields and then select Continue. For the Password value, use the Admin API access token shown in the Shopify console.

Enter a name for your connection.

Select Done.

In this step you create or reuse a query. In the query, you configure the data connection.

You sometimes need to define the column mapping in the query.

- Open the TD Console.

- Navigate to Data Workbench > Queries.

- Select the query that you plan to use to export data.

Each resource type requires specific columns and column data types.

| Action | Resource | Required columns | Query | Note |
|---|---|---|---|---|
| Create | Shop | [key: String, namespace: String, value: any] | SELECT key, namespace, value FROM table | |
| Create | Product | [product_id: Integer, key: String, namespace: String, value: any] | SELECT product_id, key, namespace, value FROM table ORDER BY product_id | Use ORDER BY to improve performance |
| Create | Product Variant | [variant_id: Integer, key: String, namespace: String, value: any] | SELECT variant_id, key, namespace, value FROM table ORDER BY variant_id | Use ORDER BY to improve performance |
| Create | Product Image | [product_id: Integer, image_id: Integer, key: String, namespace: String, value: any] | SELECT product_id, image_id, key, namespace, value FROM table ORDER BY product_id, image_id | Use ORDER BY to improve performance |
| Create | Custom Collection | [custom_collection_id: Integer, key: String, namespace: String, value: any] | SELECT custom_collection_id, key, namespace, value FROM table ORDER BY custom_collection_id | Use ORDER BY to improve performance |
| Create | Smart Collection | [smart_collection_id: Integer, key: String, namespace: String, value: any] | SELECT smart_collection_id, key, namespace, value FROM table ORDER BY smart_collection_id | Use ORDER BY to improve performance |
| Create | Customer | [customer_id: Integer, key: String, namespace: String, value: any] | SELECT customer_id, key, namespace, value FROM table ORDER BY customer_id | Use ORDER BY to improve performance |
| Create | Order | [order_id: Integer, key: String, namespace: String, value: any] | SELECT order_id, key, namespace, value FROM table ORDER BY order_id | Use ORDER BY to improve performance |
| Create | Draft Order | [draft_order_id: Integer, key: String, namespace: String, value: any] | SELECT draft_order_id, key, namespace, value FROM table ORDER BY draft_order_id | Use ORDER BY to improve performance |
| Create | Blog | [blog_id: Integer, key: String, namespace: String, value: any] | SELECT blog_id, key, namespace, value FROM table ORDER BY blog_id | Use ORDER BY to improve performance |
| Create | Article | [blog_id: Integer, article_id: Integer, key: String, namespace: String, value: any] | SELECT blog_id, article_id, key, namespace, value FROM table ORDER BY blog_id, article_id | Use ORDER BY to improve performance |
| Create | Page | [page_id: Integer, key: String, namespace: String, value: any] | SELECT page_id, key, namespace, value FROM table ORDER BY page_id | Use ORDER BY to improve performance |
| Update | | [metafield_id: Integer, value: any] | SELECT metafield_id, value FROM table | |
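The column requirements in the table follow a regular pattern: each Create query selects the resource's ID columns followed by key, namespace, and value, and orders by the ID columns, while Update selects metafield_id and value. The sketch below encodes that pattern so a query can be generated or checked before export. The resource keys (for example `product_variant`) and the function names are hypothetical labels for illustration; they are not taken from the connector.

```python
# ID columns required per resource for the Create action (from the table).
# The dictionary keys are illustrative names, not confirmed connector values.
ID_COLUMNS = {
    "shop": [],
    "product": ["product_id"],
    "product_variant": ["variant_id"],
    "product_image": ["product_id", "image_id"],
    "custom_collection": ["custom_collection_id"],
    "smart_collection": ["smart_collection_id"],
    "customer": ["customer_id"],
    "order": ["order_id"],
    "draft_order": ["draft_order_id"],
    "blog": ["blog_id"],
    "article": ["blog_id", "article_id"],
    "page": ["page_id"],
}
COMMON_COLUMNS = ["key", "namespace", "value"]


def required_columns(action, resource=None):
    """Return the column names a result must contain for the given action."""
    if action == "update":
        return ["metafield_id", "value"]
    if action != "create":
        raise ValueError(f"unknown action: {action!r}")
    if resource not in ID_COLUMNS:
        raise ValueError(f"unknown resource: {resource!r}")
    return ID_COLUMNS[resource] + COMMON_COLUMNS


def build_query(action, resource=None, table="table"):
    """Build the SELECT statement shown in the table for an action/resource."""
    sql = f"SELECT {', '.join(required_columns(action, resource))} FROM {table}"
    id_columns = ID_COLUMNS[resource] if action == "create" else []
    if id_columns:
        # ORDER BY on the ID columns is the performance hint from the table.
        sql += " ORDER BY " + ", ".join(id_columns)
    return sql


def missing_columns(result_columns, action, resource=None):
    """List required columns that are absent from a query result."""
    present = set(result_columns)
    return [c for c in required_columns(action, resource) if c not in present]


print(build_query("create", "product_image"))
```

Note that this checks column names only; the data types listed in the table still need to match in the query result.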

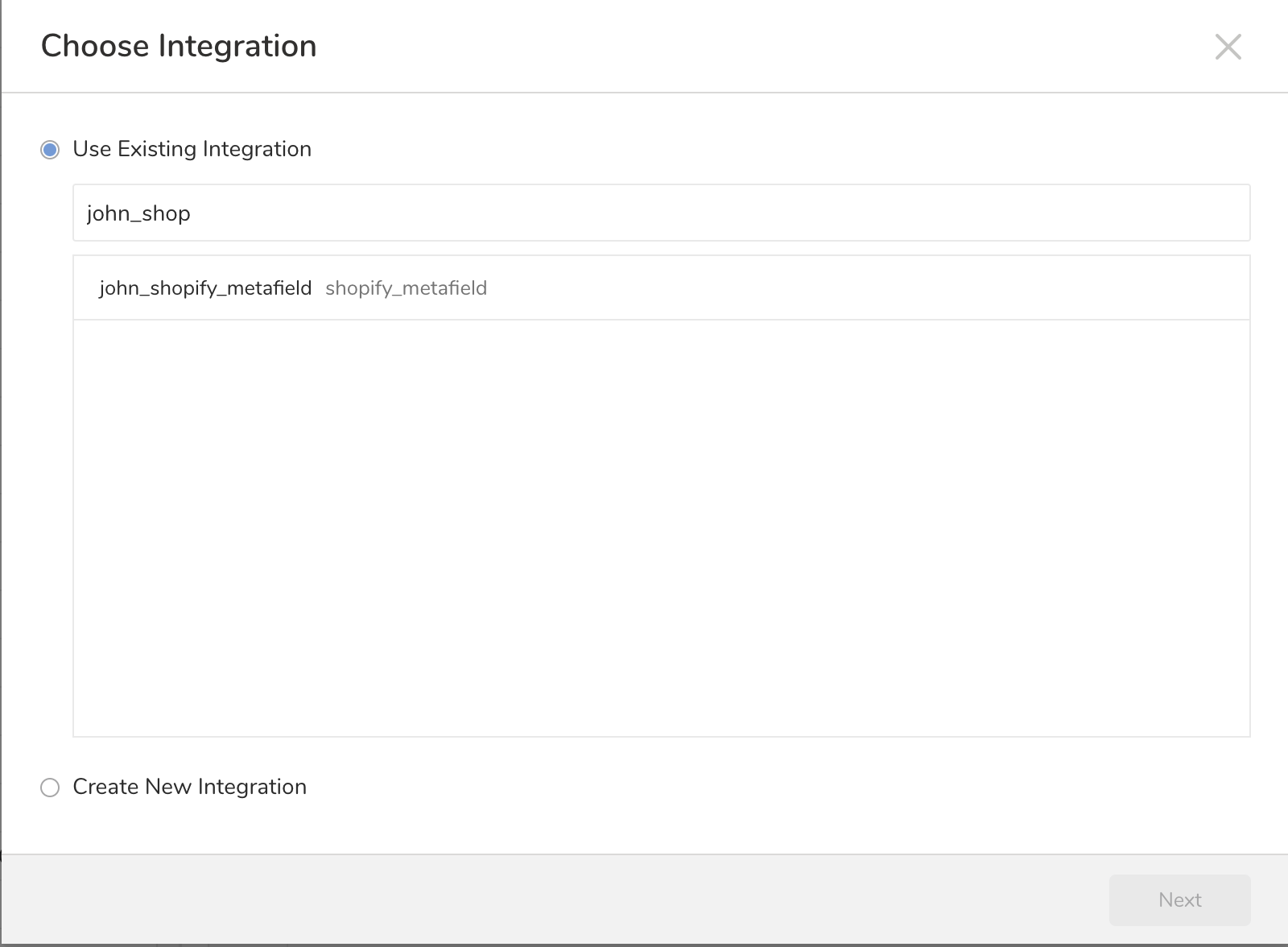

- Select Export Results, located at the top of your query editor. The Choose Integration dialog opens. You can export the results with an existing connection or create a new one.

- Type the connection name in the search box to filter.

- Select your connection. Select Next.

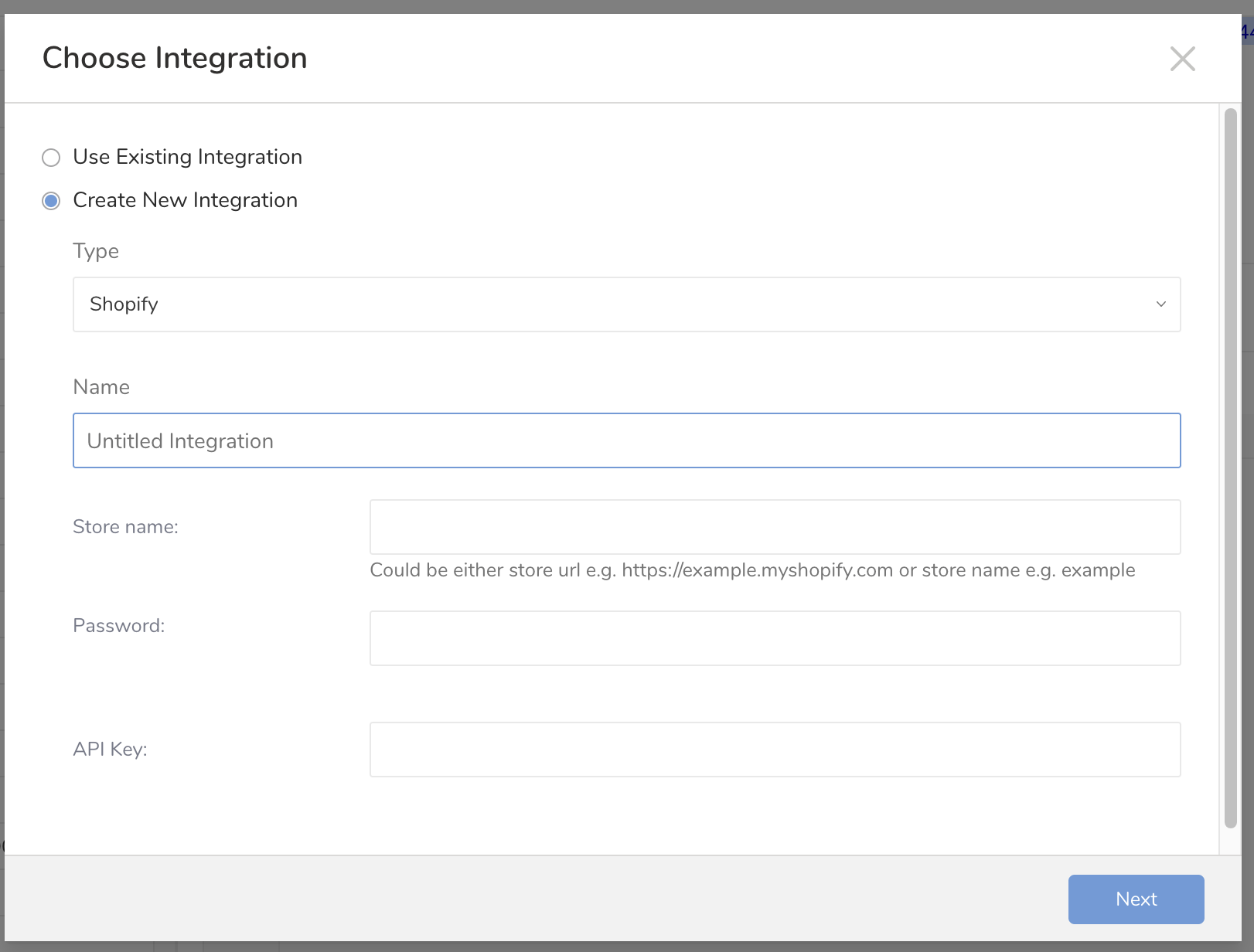

- Select Create New Integration.

- Choose the connection Type.

- Type a Name for your connection.

- Enter the Store Name, Password, and API Key.

- Select Next. The following dialog opens.

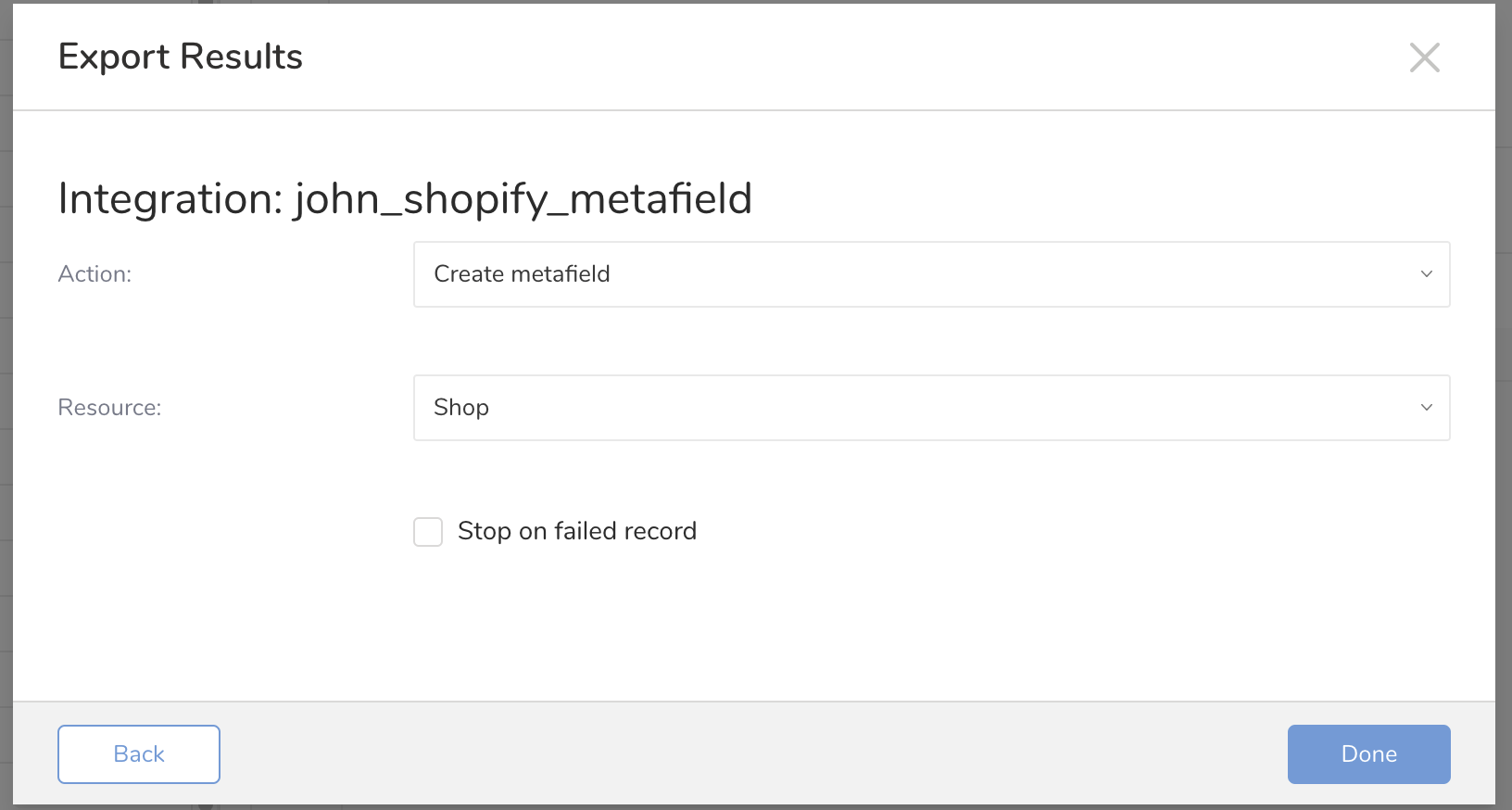

- Select an Action. If the action is to Create Metafield, select the resource to be created with the metafield.

- Select Stop on failed record if you want the job to stop when an error occurs.

- Select Done.

You can use Scheduled Jobs with Result Export to periodically write the output result to a target destination that you specify.

Treasure Data's scheduler feature supports periodic query execution to achieve high availability.

When two fields of a schedule conflict, the field that requests more frequent execution is followed and the other is ignored.

For example, if the cron schedule is '0 0 1 * 1', then the 'day of month' specification and 'day of week' are discordant because the former specification requires it to run every first day of each month at midnight (00:00), while the latter specification requires it to run every Monday at midnight (00:00). The latter specification is followed.
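To see why the day-of-week field wins in this example, it helps to count how many days in a month each field would select on its own. The following sketch does that for January 2024 using only the standard library. It illustrates the rule as stated in the text; it is not the scheduler's implementation, and the helper names are made up for this example.

```python
from datetime import date, timedelta


def matching_days(year, month, predicate):
    """Return the day numbers in the given month for which predicate is true."""
    current = date(year, month, 1)
    matches = []
    while current.month == month:
        if predicate(current):
            matches.append(current.day)
        current += timedelta(days=1)
    return matches


def is_first_of_month(d):
    # Day-of-month field '1' in the cron schedule '0 0 1 * 1'.
    return d.day == 1


def is_monday(d):
    # Day-of-week field '1' (Monday); date.weekday() returns 0 for Monday.
    return d.weekday() == 0


first_days = matching_days(2024, 1, is_first_of_month)
mondays = matching_days(2024, 1, is_monday)

# The field that selects more days requests more frequent execution.
followed = "day of week" if len(mondays) > len(first_days) else "day of month"
print(first_days, mondays, followed)
```

In January 2024 the day-of-month field selects one day and the day-of-week field selects five, so the day-of-week field is the one that is followed.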

Navigate to Data Workbench > Queries.

Create a new query or select an existing query.

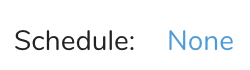

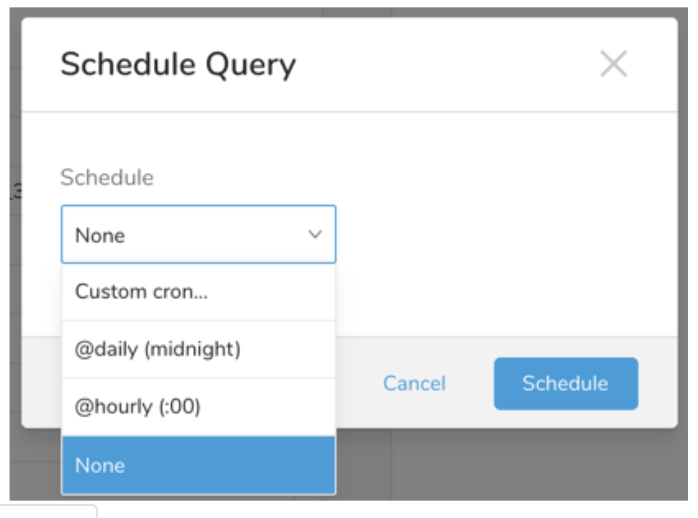

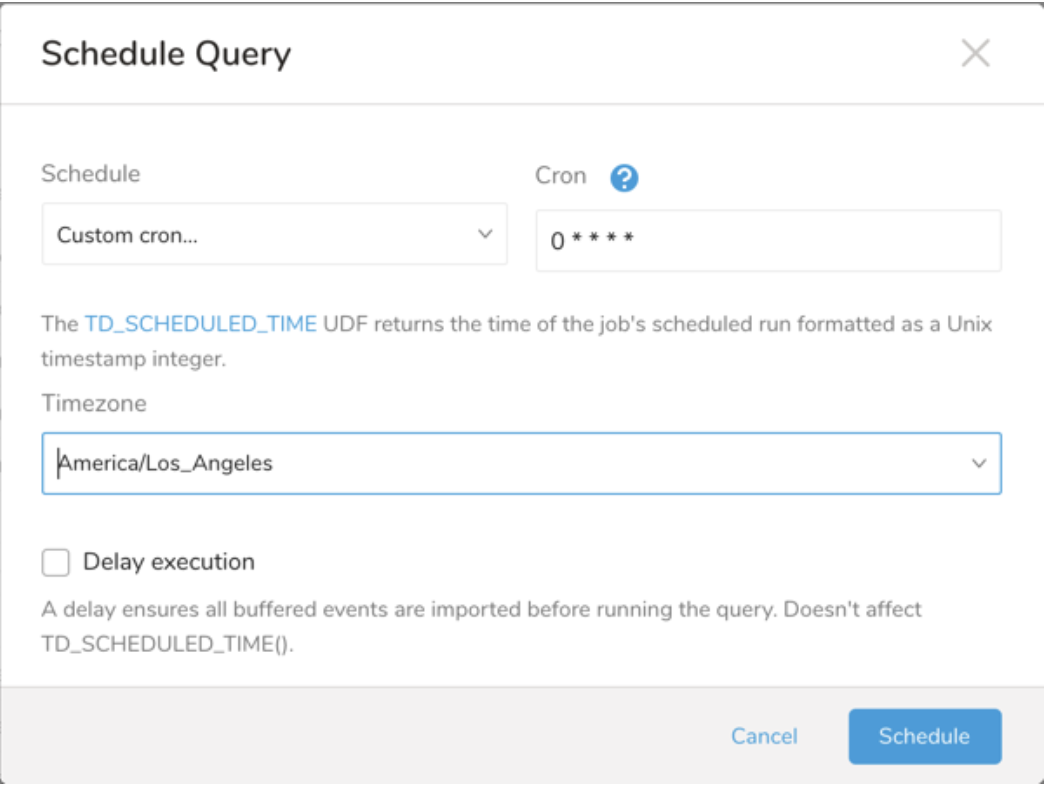

Next to Schedule, select None.

In the drop-down, select one of the following schedule options:

| Drop-down Value | Description |
|---|---|
| Custom cron... | Review Custom cron... details. |
| @daily (midnight) | Run once a day at midnight (00:00 am) in the specified time zone. |
| @hourly (:00) | Run every hour at 00 minutes. |
| None | No schedule. |

| Cron Value | Description |
|---|---|
| 0 * * * * | Run once an hour. |
| 0 0 * * * | Run once a day at midnight. |
| 0 0 1 * * | Run once a month at midnight on the morning of the first day of the month. |
| "" | Create a job that has no scheduled run time. |

     *    *    *    *    *
     -    -    -    -    -
     |    |    |    |    |
     |    |    |    |    +----- day of week (0 - 6) (Sunday=0)
     |    |    |    +---------- month (1 - 12)
     |    |    +--------------- day of month (1 - 31)
     |    +-------------------- hour (0 - 23)
     +------------------------- min (0 - 59)

The following named entries can be used:

- Day of Week: sun, mon, tue, wed, thu, fri, sat.

- Month: jan, feb, mar, apr, may, jun, jul, aug, sep, oct, nov, dec.

A single space is required between each field. The values for each field can be composed of:

| Field Value | Example | Example Description |
|---|---|---|
| A single value, within the limits displayed above for each field. | | |
| A wildcard '*' to indicate no restriction based on the field. | '0 0 1 * *' | Configures the schedule to run at midnight (00:00) on the first day of each month. |
| A range '2-5', indicating the range of accepted values for the field. | '0 0 1-10 * *' | Configures the schedule to run at midnight (00:00) on the first 10 days of each month. |
| A list of comma-separated values '2,3,4,5', indicating the list of accepted values for the field. | '0 0 1,11,21 * *' | Configures the schedule to run at midnight (00:00) every 1st, 11th, and 21st day of each month. |
| A periodicity indicator '*/5' to express how often based on the field's valid range of values a schedule is allowed to run. | '30 */2 1 * *' | Configures the schedule to run on the 1st of every month, every 2 hours starting at 00:30. '0 0 */5 * *' configures the schedule to run at midnight (00:00) every 5 days starting on the 5th of each month. |
| A comma-separated list of any of the above except the '*' wildcard is also supported '2,*/5,8-10'. | '0 0 5,*/10,25 * *' | Configures the schedule to run at midnight (00:00) every 5th, 10th, 20th, and 25th day of each month. |
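The field forms listed above (single value, wildcard, range, list, and periodicity) can be made concrete with a small expansion function that turns one cron field into the set of values it accepts. This is a sketch under stated assumptions, not the scheduler's parser: the function name `expand_field` is hypothetical, and the periodicity form is assumed to match multiples of the step, which is how the '*/5' day-of-month example is described ("starting on the 5th"). Actual scheduler behavior may differ in edge cases.

```python
def expand_field(expr, low, high):
    """Expand one cron field into the set of integer values it accepts.

    low and high are the inclusive limits of the field, for example
    0 and 23 for the hour field.
    """
    parts = expr.split(",")
    if "*" in parts and len(parts) > 1:
        # The bare wildcard is not allowed inside a comma-separated list.
        raise ValueError("'*' cannot be combined with other values")

    values = set()
    for part in parts:
        if part == "*":
            values.update(range(low, high + 1))
        elif part.startswith("*/"):
            step = int(part[2:])
            if step <= 0:
                raise ValueError(f"invalid step in {part!r}")
            # Assumption: a step matches the multiples of the step in range.
            values.update(v for v in range(low, high + 1) if v % step == 0)
        elif "-" in part:
            start, end = (int(x) for x in part.split("-", 1))
            if not low <= start <= end <= high:
                raise ValueError(f"range out of bounds: {part!r}")
            values.update(range(start, end + 1))
        else:
            value = int(part)
            if not low <= value <= high:
                raise ValueError(f"value out of bounds: {part!r}")
            values.add(value)
    return values


print(sorted(expand_field("1,11,21", 1, 31)))
print(sorted(expand_field("*/2", 0, 23)))
```

Expanding each of the five fields this way and testing a timestamp against the resulting sets is one simple way to preview when a custom cron schedule would fire.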

- (Optional) You can delay the start time of a query by enabling the Delay execution.

Save the query with a name and run, or just run the query. Upon successful completion of the query, the query result is automatically exported to the specified destination.

Scheduled jobs that continuously fail due to configuration errors may be disabled on the system side after several notifications.


You can also send segment data to the target platform by creating an activation in the Audience Studio.

- Navigate to Audience Studio.

- Select a parent segment.

- Open the target segment, right-click, and then select Create Activation.

- In the Details panel, enter an Activation name and configure the activation according to the previous section on Configuration Parameters.

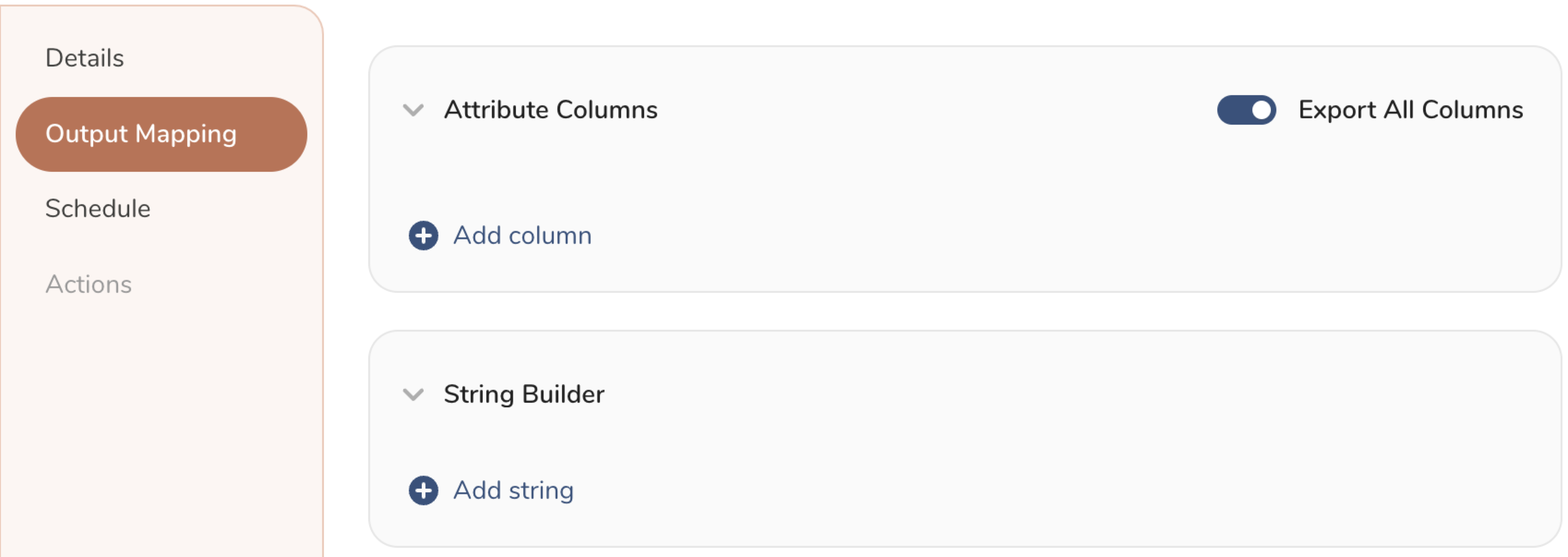

- Customize the activation output in the Output Mapping panel.
  - Attribute Columns
    - Select Export All Columns to export all columns without making any changes.
    - Select + Add Columns to add specific columns for the export. The Output Column Name pre-populates with the same Source column name. You can update the Output Column Name. Continue to select + Add Columns to add new columns for your activation output.
  - String Builder
    - Select + Add string to create strings for export. Select from the following values:
      - String: Choose any value; use text to create a custom value.
      - Timestamp: The date and time of the export.
      - Segment Id: The segment ID number.
      - Segment Name: The segment name.
      - Audience Id: The parent segment number.

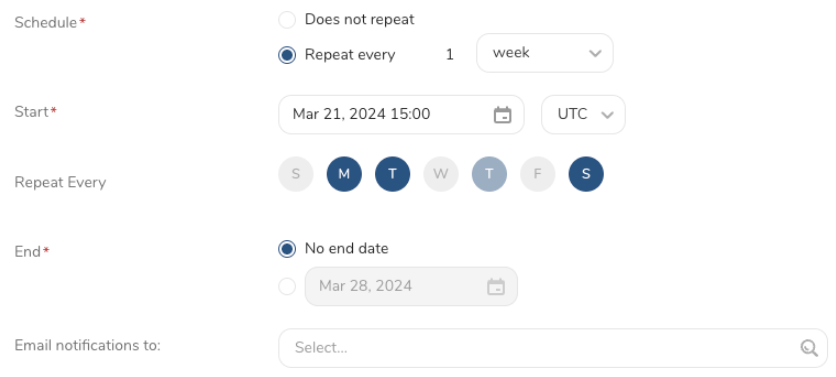

- Set a Schedule.

- Select the values to define your schedule and optionally include email notifications.

- Select Create.

If you need to create an activation for a batch journey, review Creating a Batch Journey Activation.

Create metafield

    timezone: UTC

    _export:
      td:
        database: sample_datasets

    +td-result-into-target:
      td>: queries/sample.sql
      result_connection: your_connections_name
      result_settings:
        apikey: {apikey}
        password: {password}
        store_name: {store_name}
        action: create
        resource: shop
        stop_on_failed_record: false

Update metafield

    timezone: UTC

    _export:
      td:
        database: sample_datasets

    +td-result-into-target:
      td>: queries/sample.sql
      result_connection: your_connections_name
      result_settings:
        apikey: {apikey}
        password: {password}
        store_name: {store_name}
        action: update
        stop_on_failed_record: false

Click here for more information on using data connectors in workflow to export data.
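The two workflow definitions above differ only in the action value and in whether a resource is given. If you generate workflows programmatically, a small helper can assemble the result_settings mapping and catch a missing resource early. The sketch below is illustrative only: the function name is hypothetical, and the rule that resource is required for create and left out for update is inferred from the two examples rather than from a documented specification.

```python
def build_result_settings(store_name, apikey, password, action,
                          resource=None, stop_on_failed_record=False):
    """Assemble a result_settings mapping like the ones in the workflows above.

    Assumption: 'create' needs a resource and 'update' does not, mirroring
    the two example workflows.
    """
    if action not in ("create", "update"):
        raise ValueError(f"unknown action: {action!r}")
    if action == "create" and not resource:
        raise ValueError("a resource is required when action is 'create'")

    settings = {
        "apikey": apikey,
        "password": password,
        "store_name": store_name,
        "action": action,
    }
    if action == "create":
        settings["resource"] = resource
    settings["stop_on_failed_record"] = stop_on_failed_record
    return settings


# Placeholder credentials; real values come from your Shopify custom app.
create_settings = build_result_settings(
    "example-shop-123", "my-api-key", "my-access-token", "create", resource="shop"
)
print(create_settings)
```

The resulting dictionary can be serialized to YAML with the tool of your choice and placed under result_settings in the workflow file.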